There’s no denying the growing impact of AI technologies on our society in recent years. With more and more smartphone apps, smart companions, and devices adopting AI assistants such as Cortana and Siri, EU regulators have decided to take a stand.

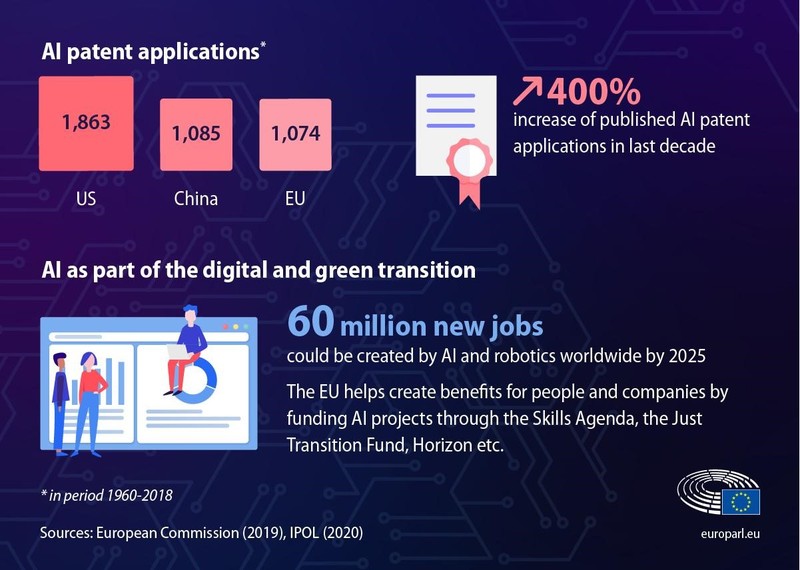

According to the findings of the European Parliament, 60 million new jobs could be created thanks to AI and robotics by the end of 2025. The same report suggested a 400% increase in published AI patents over the last decade compared with earlier periods. With AI growing so quickly in adoption and popularity, EU lawmakers moved to introduce legislation governing how AI may be used.

Similar to GDPR, this new legislation aims to spell out exactly how AI can and cannot be used within the EU. While some businesses and executives may see this as draconian, it is a necessary step toward regulating artificial intelligence technologies. What exactly does this regulation entail, and how will it affect businesses both inside and outside the EU?

The Ethics of AI Implementation

The most important area the bill addresses is the ethics of AI use in everyday life. The legislators’ concerns boil down to the fact that AI, while extremely competent and useful in some cases, cannot substitute for human judgment.

Implementing AI in healthcare, science, research, and other important fields can be fruitful in the short term but detrimental in the long term. Researchers and medical experts may be hindered by AI rather than augmented by it, because it will automate much of their groundwork. The legislators also worry that AI, with its high processing power and capacity for automation, could eliminate jobs.

While AI-related jobs will sprout in the wake of its wider application, others will vanish as if they never existed. The first step toward regulating AI, then, is to divide its uses into three risk stages and explain how each stage should be treated.

Breaking Down the Three Risk Stages of AI

Rather than lumping all artificial intelligence into a single group, EU lawmakers have outlined three separate levels of “implementation risk”. Dubbed “low”, “high”, and “unacceptable”, these risk stages are meant to serve as reference points for businesses and research institutions.

This legislation aims to let low-risk AI algorithms, used mainly in automation, operate as they did before. However, high-risk and unacceptable-risk AI would require additional approvals and careful monitoring when implemented in the EU. This has already sparked a backlash from many businesses inside and outside the EU, on the grounds that it impedes steady progress. What, then, does each of these risk stages look like in practice?

-

Low Risk

The low-risk tier covers AI systems such as chatbots and personal assistants. Brands must make it clear to their users when they are not speaking directly to a human being.

This is a pro-consumer move that should make it easier for people to engage with brands online, fostering a more transparent and trustworthy B2C relationship. However, it remains to be seen how well businesses will take to these rules, since they will be mandatory for any business operating in the EU.

-

High Risk

High-risk systems are those with the potential to directly harm a user’s financial, physical, or mental wellbeing. Financial institutions with high levels of automation are likely to be hit hardest here. AI used to parse, index, and analyze user data, often without the end user knowing it’s happening, will have to become transparent.

This will make it much harder for businesses to run high-end AI for data analysis and customer targeting without users’ explicit consent. Again, at a glance, this appears to be a pro-user move by the European Parliament, but its results remain uncertain for now.

-

Unacceptable Risk

China’s social scoring system has been at the center of controversy in Western discourse for many years, which is part of why this AI regulation is being pushed now. Unacceptable-risk AI systems are those that treat their users as nothing more than data to be parsed. Examples include public biometric scanning, public security cameras with facial recognition, and the aforementioned social scoring algorithms.

This part of the legislation aims to minimize discriminatory practices against social groups across the EU. Some exceptions apply: AI can still be used to find missing persons or to help solve crimes. For day-to-day applications of unacceptable-risk AI, however, corporate entities will have no way of obtaining licenses.

The legislation outlined by the EU is neither finalized nor without its faults. At first glance, it is very restrictive, and its red tape and bureaucracy will make future AI development far more difficult. On the other hand, it will curb unregulated AI use by any corporate entity with a stake in the EU. Until the regulations are finalized, however, businesses risk breaking rules without knowing it.

EU AI Regulations Beyond the EU

Much like GDPR, the AI Act doesn’t concern only EU citizens. Any business that sells products in the EU, or operates there in any way, will have to comply with the new regulations. Whether a business is based in the US, in Asia, or, since Brexit, in the UK, it will be held to the new standards in full.

This will create further problems for UK firms that had been EU-compliant for many years before Brexit. With AI development moving forward rapidly, compliance may become an issue depending on how far along their systems have progressed.

What will happen to innovative, disruptive AI technologies that EU lawmakers have now classified as unacceptable or high risk? Do their owners risk heavy fines, or even confiscation or mandatory deletion of the technology, because it is misaligned with the new EU standards? Only time will tell whether there will be any grey area to speak of and how existing projects will be treated.

Corporate vs. Academic Implementation of the AI Act

We’ve addressed how the new AI regulations will affect corporate entities and businesses oriented toward AI development. Academic research, however, won’t be held to the same standards in the EU.

Universities, research centers, and laboratories will fall under a different set of AI regulations, given their focus on progress in a controlled environment. This raises concerns of its own, however. What constitutes academic AI development under the new AI Act? An AI system built with seemingly harmless intent could develop into something malicious, which the EU would then restrict.

It remains to be seen how this will be regulated compared to corporate-level AI implementation. What is clear is that the fines attached to the AI Act are comparable in severity to those under GDPR. Businesses and corporate entities that don’t comply with the regulations will face substantial penalties from the EU.

What Does the Future Hold for AI in the EU? (Conclusion)

The only path forward for businesses that wish to operate in the EU is to start complying with these regulations early. The model EU lawmakers have proposed is solid, but it is not without faults. If GDPR is anything to go by, the EU will continue to refine and tweak its AI Act in the years ahead.

One thing that isn’t in question is how users will respond to this act. End customers and everyday people will welcome the AI Act because it serves their interests. Knowing when and how you’re interacting with an AI, in public or online, is what many would consider a common courtesy.

The real question is why businesses are so keen on keeping that fact private. After all, businesses are run by people, and without regulated AI use, we risk the technology running rampant. If that should happen, it would be difficult to rein in corporate entities that have used AI without any form of regulation. From this point of view, EU AI regulations are a net positive for everyone.